iPhoneAlle anzeigen

iPhone 11iPhone 11 ProiPhone 11 Pro MaxiPhone 12iPhone 12 ProiPhone 12 Pro MaxiPhone 12 miniiPhone 13iPhone 13 ProiPhone 13 Pro MaxiPhone 13 miniiPhone 14iPhone 14 PlusiPhone 14 ProiPhone 14 Pro MaxiPhone 15iPhone 15 PlusiPhone 15 ProiPhone 15 Pro MaxiPhone 16iPhone 16 PlusiPhone 16 ProiPhone 16 Pro MaxiPhone 16eiPhone 8iPhone 8 PlusiPhone SE 2020iPhone SE 2022iPhone XiPhone XRiPhone XSiPhone XS Max

Galaxy A-SerieAlle anzeigen

Galaxy A12Galaxy A13Galaxy A13 5GGalaxy A14Galaxy A15Galaxy A16Galaxy A17 5GGalaxy A20eGalaxy A21Galaxy A22Galaxy A23Galaxy A24Galaxy A25Galaxy A26 5GGalaxy A32Galaxy A33Galaxy A34Galaxy A35Galaxy A36 5GGalaxy A40Galaxy A41Galaxy A42Galaxy A50Galaxy A51Galaxy A52Galaxy A53Galaxy A54Galaxy A55Galaxy A56 5GGalaxy A70Galaxy A71Galaxy A72

Redmi SeriesAlle anzeigen

Redmi 10Redmi 13CRedmi 15 5GRedmi Note 10Redmi Note 10 ProRedmi Note 11Redmi Note 11 ProRedmi Note 11 Pro Plus 5GRedmi Note 11sRedmi Note 12Redmi Note 12 5GRedmi Note 12 ProRedmi Note 12 Pro 5GRedmi Note 12 Pro PlusRedmi Note 13Redmi Note 13 5GRedmi Note 13 ProRedmi Note 13 Pro PlusRedmi Note 14Redmi Note 14 Pro PlusRedmi Note 8Redmi Note 8 ProRedmi Note 9Redmi Note 9 Pro

- Startseite

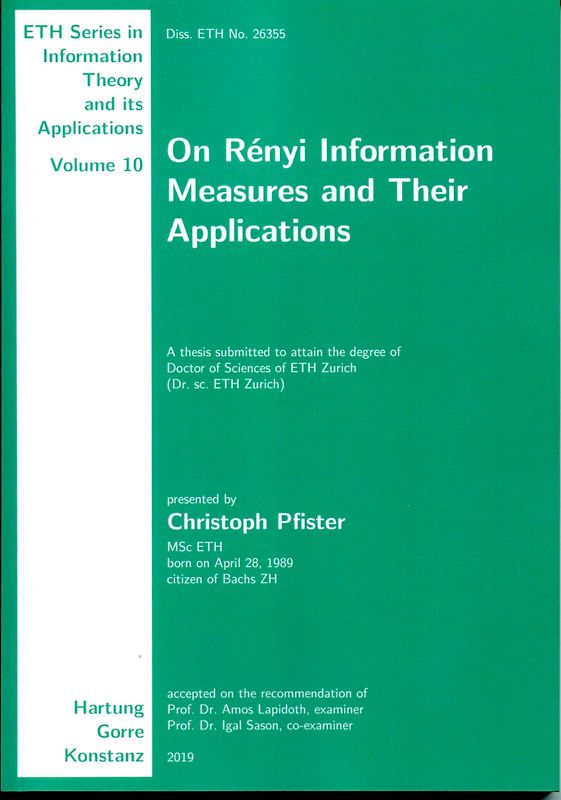

Bücher Englische Bücher Wissen & Bildung Naturwissenschaft, Medizin, Informatik, Technik Informatik & EDV Datenkommunikation & Netzwerke On Rényi Information Measures and Their Applications

Currently unavailable

Hand-checked used goods

Up to 50% cheaper than new

For the sake of the environment

On Rényi Information Measures and Their Applications

Christoph Pfister (unknown binding, English)


Description

The solutions to many problems in information theory can be expressed using Shannon’s information measures such as entropy, relative entropy, and mutual information. Other problems—for example source coding with exponential cost functions, guessing, and task encoding—require Rényi’s information measures, which generalize Shannon’s.
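As a rough illustration of how Rényi's measures generalize Shannon's, the sketch below uses the standard textbook definitions of the Rényi entropy and Rényi divergence of order α and compares them with the Shannon entropy and relative entropy as α approaches 1. The function names and the example distributions are illustrative assumptions, not taken from the thesis, and the code assumes finite alphabets with strictly positive `q`.

```python
import math

def shannon_entropy(p):
    """Shannon entropy in nats."""
    return -sum(x * math.log(x) for x in p if x > 0)

def renyi_entropy(p, alpha):
    """Rényi entropy of order alpha (alpha > 0, alpha != 1), in nats."""
    if alpha <= 0 or alpha == 1:
        raise ValueError("alpha must be positive and different from 1")
    return math.log(sum(x ** alpha for x in p if x > 0)) / (1 - alpha)

def relative_entropy(p, q):
    """Relative entropy (Kullback-Leibler divergence) in nats; assumes q > 0."""
    return sum(x * math.log(x / y) for x, y in zip(p, q) if x > 0)

def renyi_divergence(p, q, alpha):
    """Rényi divergence of order alpha (alpha > 0, alpha != 1); assumes q > 0."""
    if alpha <= 0 or alpha == 1:
        raise ValueError("alpha must be positive and different from 1")
    s = sum(x ** alpha * y ** (1 - alpha) for x, y in zip(p, q) if x > 0)
    return math.log(s) / (alpha - 1)

# Illustrative distributions (assumptions, not from the thesis).
p = [0.5, 0.3, 0.2]
q = [0.2, 0.5, 0.3]

# For alpha close to 1 the Rényi quantities approach Shannon's.
print(renyi_entropy(p, 1.000001), shannon_entropy(p))
print(renyi_divergence(p, q, 0.999999), relative_entropy(p, q))
```

For a uniform distribution the Rényi entropy equals the logarithm of the alphabet size for every order, which gives a quick sanity check on the implementation.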

The contributions of this thesis are as follows: new problems… related to guessing, task encoding, hypothesis testing, and horse betting are solved; and two new Rényi measures of dependence and a new conditional Rényi divergence appearing in these problems are analyzed.

The two closely related families of Rényi measures of dependence are studied in detail, and it is shown that they share many properties with Shannon’s mutual information, but the data-processing inequality is only satisfied by one of them. The dependence measures are based on the Rényi divergence and the relative α-entropy, respectively.

The new conditional Rényi divergence is compared with the conditional Rényi divergences that appear in the definitions of the dependence measures by Csiszár and Sibson, and the properties of all three are studied with emphasis on their behavior under data processing. Just as Csiszár’s and Sibson’s conditional divergences lead to the respective dependence measures, the new conditional divergence leads to the first of the new Rényi dependence measures. Moreover, the new conditional divergence is also related to Arimoto’s measures.

The first solved problem is about guessing with distributed encoders: Two dependent sequences are described separately and, based on their descriptions, have to be determined by a guesser. The description rates are characterized for which the expected number of guesses until correct (or, more generally, its ρ-th moment for some positive ρ) can be driven to one as the length of the sequences tends to infinity. The characterization involves the Rényi entropy and the Arimoto–Rényi conditional entropy.
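To give a feel for why the Rényi entropy appears in guessing problems, the following sketch looks at the classical single-source setting rather than the distributed setting treated in the thesis. It computes the ρ-th moment of the number of guesses when candidates are tried in order of decreasing probability, and compares it with the bounds due to Arikan, which involve the Rényi entropy of order 1/(1+ρ). The function names and the example distribution are illustrative assumptions.

```python
import math

def renyi_entropy(p, alpha):
    """Rényi entropy of order alpha (alpha > 0, alpha != 1), in nats."""
    return math.log(sum(x ** alpha for x in p if x > 0)) / (1 - alpha)

def guessing_moment(p, rho):
    """rho-th moment of the number of guesses when guessing in order of
    decreasing probability (the k-th guess is the k-th most likely value)."""
    ordered = sorted(p, reverse=True)
    return sum(prob * k ** rho for k, prob in enumerate(ordered, start=1))

def arikan_bounds(p, rho):
    """Lower and upper bounds on the optimal guessing moment (rho > 0),
    expressed through the Rényi entropy of order 1 / (1 + rho)."""
    upper = math.exp(rho * renyi_entropy(p, 1 / (1 + rho)))
    lower = upper / (1 + math.log(len(p))) ** rho
    return lower, upper

# Illustrative distribution (an assumption, not from the thesis).
p = [0.5, 0.3, 0.2]
for rho in (0.5, 1.0, 2.0):
    lower, upper = arikan_bounds(p, rho)
    print(rho, lower, guessing_moment(p, rho), upper)
```

For sequences of growing length the two bounds differ only by a factor that is subexponential in the length, which is why the Rényi entropy governs the exponential growth rate of the guessing moments in this classical setting.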

The related second problem is about distributed task encoding: Two dependent sequences are described separately, and a decoder produces a list of all the sequence pairs that share the given descriptions. The description rates are characterized for which the expected size of this list (or, more generally, its ρ-th moment for some positive ρ) can be driven to one as the length of the sequences tends to infinity. The characterization involves the Rényi entropy and the second of the new Rényi dependence measures.

The third problem is about a hypothesis testing setup where the observation consists of independent and identically distributed samples from either a known joint probability distribution or an unknown product distribution. The achievable error-exponent pairs are established and the Fenchel biconjugate of the error-exponent function is related to the first of the new Rényi dependence measures. Moreover, an example is provided where the error-exponent function is not convex and thus not equal to its Fenchel biconjugate.

The fourth problem is about horse betting, where, instead of Kelly’s expected log-wealth criterion, a more general family of power-mean utility functions is considered. The key role in the analysis is played by the Rényi divergence, and the setting where the gambler has access to side information motivates the new conditional Rényi divergence. The proposed family of utility functions in the context of gambling with side information also provides another operational meaning to the first of the new Rényi dependence measures. Finally, a universal strategy for independent and identically distributed races is presented that asymptotically maximizes the gambler’s utility function without knowing the winning probabilities or the parameter of the utility function.
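The relation between Kelly's criterion and a power-mean utility can be sketched numerically. The code below assumes one possible parametrization, (1/β) log E[(wealth factor)^β] for β ≠ 0 with the expected log-wealth as the β = 0 case; the thesis may define its family differently. The helper `stationary_bets` is hypothetical: it is the stationary point obtained from a Lagrange-multiplier argument under this assumed parametrization for β < 1, not a formula quoted from the thesis. Bets are assumed strictly positive, and the probabilities and odds are made-up examples.

```python
import math

def log_wealth(p, odds, bets):
    """Kelly's criterion: expected logarithm of the wealth factor bets[x] * odds[x]."""
    return sum(px * math.log(b * o) for px, o, b in zip(p, odds, bets) if px > 0)

def power_mean_utility(p, odds, bets, beta):
    """Assumed parametrization: (1 / beta) * log E[(bets * odds) ** beta];
    beta == 0 falls back to the expected log-wealth."""
    if beta == 0:
        return log_wealth(p, odds, bets)
    moment = sum(px * (b * o) ** beta for px, o, b in zip(p, odds, bets))
    return math.log(moment) / beta

def stationary_bets(p, odds, beta):
    """Hypothetical helper: bets proportional to (p * odds ** beta) ** (1 / (1 - beta)),
    the stationary point of the assumed utility for beta < 1."""
    if beta >= 1:
        raise ValueError("this sketch only covers beta < 1")
    weights = [(px * o ** beta) ** (1 / (1 - beta)) for px, o in zip(p, odds)]
    total = sum(weights)
    return [w / total for w in weights]

# Made-up winning probabilities and odds (assumptions, not from the thesis).
p = [0.5, 0.3, 0.2]
odds = [2.0, 3.0, 6.0]

# beta = 0 gives proportional betting, i.e. Kelly's strategy.
print(stationary_bets(p, odds, 0.0))
# For small beta the power-mean utility is close to the expected log-wealth.
print(power_mean_utility(p, odds, p, 1e-6), log_wealth(p, odds, p))
```

Under this parametrization, negative β penalizes low wealth factors more strongly than Kelly's criterion does, while β between 0 and 1 shifts the bets toward horses with high odds.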


Technical data

- Publication date: 31 January 2020
- Language: English
- EAN: 9783866286634
- Publisher: Hartung-Gorre
- Series or volume title: ETH Series in Information Theory and its Applications
- Special edition: No
- Author: Christoph Pfister
- Number of pages: 174
- Edition: 1
- Binding: Unknown binding
